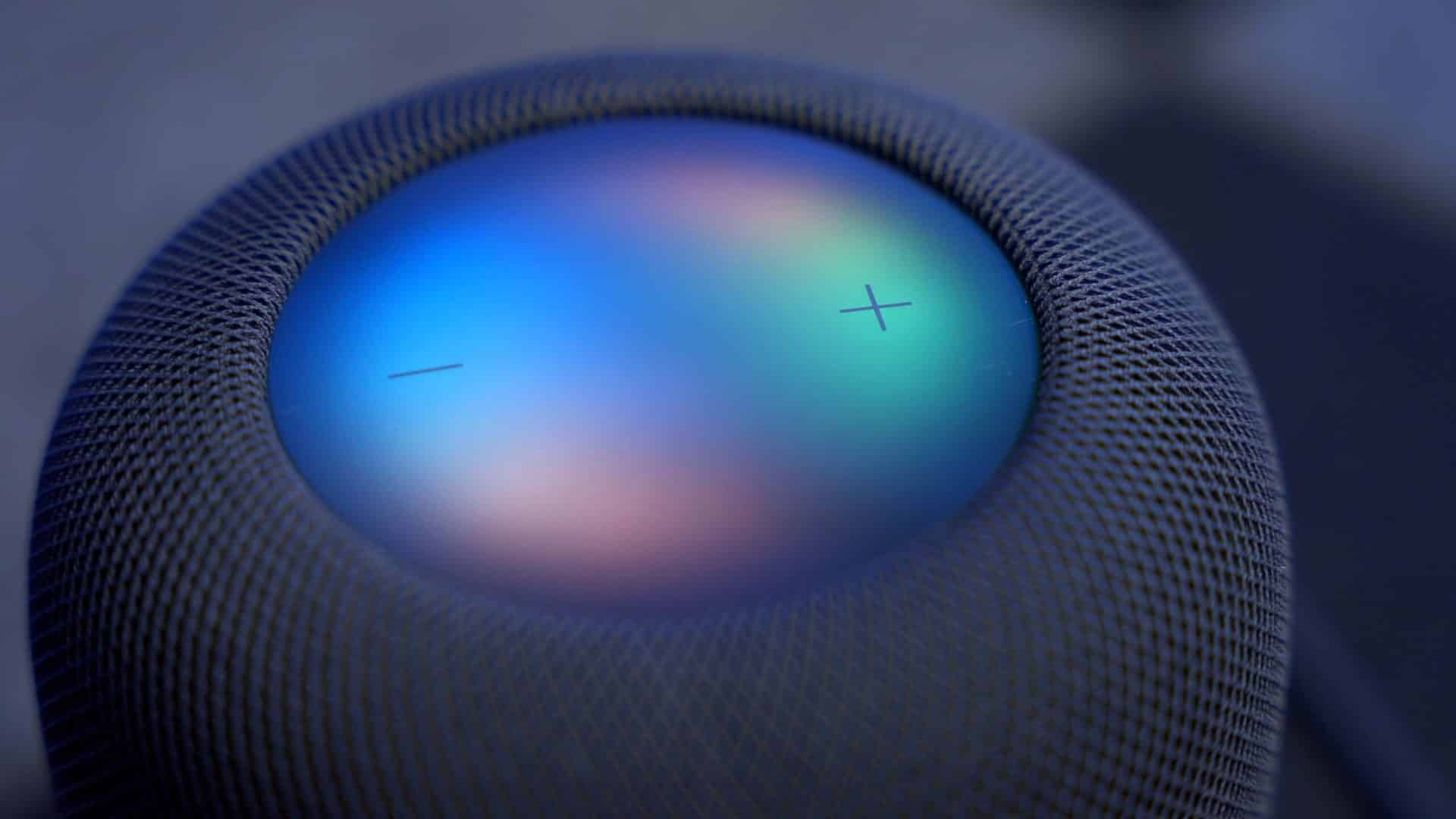

The Gemini-powered Siri update is about to become one of the most meaningful software upgrades Apple has delivered to the iPhone in years. With iOS 26.4, scheduled for release in late February, Apple will integrate Google’s Gemini AI models directly into Siri’s intelligence layer, marking the first public step in a deeper transformation of Apple Intelligence. This move is not about turning Siri into a generic chatbot. It is about making the assistant finally understand language, context, and intent at a level that matches how people actually speak.

For more than a decade, Siri has been built around commands and triggers. You asked for things in specific ways, and the system translated them into actions. That model worked for alarms, timers, and simple queries, but it broke down when conversations became more natural or complex. The arrival of Gemini in Siri changes that foundation. Instead of only mapping commands, Siri will be able to reason about language using Gemini’s large-scale models while still operating inside Apple’s tightly controlled privacy framework.

This integration comes at a time when the entire tech industry is being reshaped by large language models. Companies like OpenAI, Google, Microsoft, and xAI are racing to build massive AI systems trained on trillions of words. Apple’s strategy has been different. Rather than pushing everything to the cloud, Apple is combining on-device models, private cloud processing, and selected external partnerships. Gemini is now becoming one of the engines inside that hybrid system.

The New Siri: How Gemini Will Work

When you speak to Siri after iOS 26.4, the request will still be captured and processed locally first. Tasks like wake-word detection, speech recognition, and personal data filtering happen on the device, just as they do now. Only when a request requires deeper language reasoning does it move into Apple’s Private Cloud Compute environment, where Gemini models operate inside isolated Apple-controlled servers.
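The routing described above can be pictured with a minimal Python sketch. It is an illustration only, not Apple's implementation: the `Request` type, the `CLOUD_INTENTS` set, the digit-masking filter, and the `route` function are all hypothetical names invented here to show the "local first, escalate only when needed" idea.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical intent labels that are assumed to need deeper language reasoning.
CLOUD_INTENTS = {"summarize", "rewrite", "multi_step"}


@dataclass
class Request:
    transcript: str  # text assumed to come from on-device speech recognition
    intent: str      # coarse intent label assumed to be assigned on device


def filter_personal_data(text: str) -> str:
    """Stand-in for on-device personal data filtering: masks every digit."""
    return "".join("#" if ch.isdigit() else ch for ch in text)


def route(request: Request) -> Tuple[str, str]:
    """Return (destination, payload) for a request.

    Simple requests stay on the device. Requests that need deeper
    reasoning are filtered locally and then sent to the cloud tier.
    """
    if request.intent in CLOUD_INTENTS:
        return ("private_cloud_compute", filter_personal_data(request.transcript))
    return ("on_device", request.transcript)
```

In this sketch, a timer request never leaves the device, while a summarization request is filtered locally before it is handed to the cloud tier.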

This design is critical. Gemini is not running on Google’s infrastructure when used by Siri. Apple deploys the models in its own secure environment, ensuring that user data is not mixed with external systems or used to train Google’s models. This is how Apple can raise Siri’s intelligence without weakening its privacy promises.

From a user perspective, the benefit shows up in conversation quality. With Gemini behind it, Siri will be able to handle multi-step instructions, follow-up questions, and more descriptive requests. You will no longer need to restate context as often. If you ask Siri to summarize something and then refine it, the assistant will understand that you are continuing the same task.

What iOS 26.4 Brings to Siri

The February release is not designed to turn Siri into a full chat interface. Instead, it focuses on upgrading the underlying language layer. That means better understanding of what you say, longer and more coherent responses, and improved handling of tasks that involve writing, summarizing, or connecting information.

Apple has already been using its own smaller models for things like transcription, text prediction, and command execution. Gemini fills the gap for higher-level language reasoning. This allows Siri to generate more natural replies, understand nuance, and interpret longer requests without falling apart.

Features tied to Apple Intelligence, such as rewriting text, summarizing notes, and generating short messages, are expected to become noticeably more reliable after the update. The goal is not flashy demos but consistency. When you ask Siri to do something with words, it should finally feel dependable.

Why Apple Chose Google Gemini

Apple has been developing its own foundation models for years, but the company also recognizes the scale required to compete with the largest AI platforms. Gemini represents one of the most advanced model families available, trained across text, code, and multimodal data.

By licensing Gemini and running it inside Apple’s private infrastructure, Apple gains access to that capability without giving up control of user data. This mirrors Apple’s approach with OpenAI, where ChatGPT is offered as an optional tool for certain tasks while core system functions remain inside Apple’s ecosystem.

The Gemini partnership is not about replacing Apple’s AI. It is about strengthening it. Apple continues to use its own models for personal context, device control, and privacy-sensitive operations. Gemini handles the heavy lifting for language generation and understanding.

What This Means for Apple Intelligence

Apple Intelligence is built as a layered system. At the bottom are on-device models that handle immediate tasks. Above that is Apple’s Private Cloud Compute for workloads that need more power but still require privacy. Gemini now sits inside that cloud layer as one of the engines that produces language.
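As a rough mental model of that layering, the following Python sketch lists the layers from the bottom up and picks the lowest one that can handle a task. The `Layer` type, the layer names, and the task labels are assumptions made for illustration; the article does not specify how Apple actually assigns work to each layer.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Layer:
    name: str               # hypothetical label for the layer or engine
    runs_on: str            # where the layer executes
    tasks: FrozenSet[str]   # illustrative task labels, not an official list


# Ordered bottom-up: the lowest capable layer is preferred.
LAYERS: List[Layer] = [
    Layer("on_device_models", "device",
          frozenset({"transcription", "text_prediction", "command_execution"})),
    Layer("gemini_engine", "private_cloud_compute",
          frozenset({"language_generation", "language_understanding"})),
]


def pick_layer(task: str) -> Layer:
    """Return the lowest layer whose task set contains the given task."""
    for layer in LAYERS:
        if task in layer.tasks:
            return layer
    raise ValueError(f"no layer handles task: {task}")
```

Ordering the list bottom-up reflects the idea that the device handles whatever it can, and the cloud tier is used only for work the lower layer cannot do.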

This structure allows Apple to offer advanced AI without turning the iPhone into a thin client. You still get fast responses, offline capabilities for many tasks, and strong data protection. The Gemini upgrade is about closing the gap between Siri and the conversational AI systems people have come to expect elsewhere.

What Comes Next After February

Apple has already hinted that this is only the beginning. Later in 2026, a more conversational version of Siri is planned, one that can maintain ongoing dialogues, provide proactive suggestions, and operate more like a true digital assistant.

The iOS 26.4 update lays the groundwork for that future. By moving Siri onto a modern foundation-model layer now, Apple can add more advanced features later without rebuilding the entire system again. Every improvement that follows will build on the same Gemini-powered core.

When iOS 26.4 arrives, Siri will not suddenly feel like a different product, but it will feel more capable. Requests that used to confuse Siri should be handled more smoothly. Conversations should feel less rigid. Writing and summarization tasks should be more accurate.

For many people, this will be the first time Siri feels like it truly understands what they are saying. And because of Apple’s privacy-first architecture, that intelligence comes without the trade-offs that often accompany cloud-based AI systems.